The METU Multi-Modal Stereo Datasets comprise two datasets: (1) synthetically altered stereo image pairs from the Middlebury Stereo Evaluation Dataset and (2) visible-infrared image pairs captured with a Kinect device.

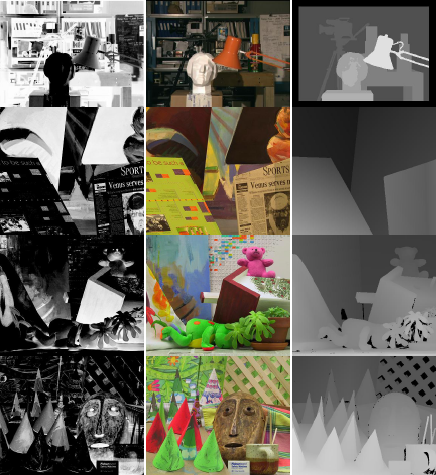

This dataset contains the four popular image pairs (Tsukuba, Venus, Cones and Teddy) of the Middlebury Stereo Evaluation Dataset. The left images are altered synthetically by applying a cosine transform, cos(I*Pi/255), to the pixel intensities I.
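The cosine transform can be sketched as a 256-entry lookup table over 8-bit intensities. This is a minimal illustration, not the authors' code: the names `cosine_lut` and `alter_image` are hypothetical, and the linear rescaling of the cosine output from [-1, 1] back to [0, 255] is an assumption, since the text only gives the formula cos(I*Pi/255).

```python
import math


def cosine_lut(rescale=True):
    """Build a lookup table for cos(I * pi / 255), I in 0..255."""
    lut = []
    for intensity in range(256):
        value = math.cos(intensity * math.pi / 255.0)  # lies in [-1, 1]
        if rescale:
            # Assumption: map [-1, 1] linearly onto [0, 255] for 8-bit storage.
            value = int(round((value + 1.0) * 127.5))
        lut.append(value)
    return lut


def alter_image(rows, lut):
    """Apply the lookup table to a grayscale image given as a list of rows."""
    return [[lut[pixel] for pixel in row] for row in rows]


lut = cosine_lut()
altered = alter_image([[0, 1, 255]], lut)
```

Under this rescaling the mapping is decreasing, so bright pixels become dark and vice versa, and neighbouring intensities near the extremes collapse to the same output value (for example, inputs 0 and 1 both map to 255).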

This dataset makes it possible to compute statistics of the test results, which gives more insight into the performance of the evaluated methods. It also allows any current or future method to be compared, using the same result metrics, with the methods listed on the Middlebury evaluation site, although the results reported there are for unimodal image pairs.

Note that, in the left images, important details are lost due to the cosine transformation.

In the experiments, statistics are computed over the "all" regions provided by the Middlebury page, after clipping the region near the left border that does not exist in the right image, since extrapolating into those regions is outside the scope of the study. The image borders are also excluded by 32 pixels because of limitations of the evaluated similarity measures. In addition, for window-based methods, half of the window size at the borders is also discarded when computing performance statistics, for a fair comparison between methods.
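The border exclusions described above can be sketched as a helper that returns the pixel range kept for evaluation. This is an illustration under stated assumptions: the function name `evaluation_bounds` is hypothetical, the half-window margin is assumed to be added on top of the 32-pixel border, and the clipping of the left region that is not visible in the right image is not modelled because its width is not given here.

```python
def evaluation_bounds(height, width, border=32, window_size=0):
    """Return (top, bottom, left, right) of the evaluated region.

    Rows top..bottom-1 and columns left..right-1 are kept.
    Assumption: the half-window margin is added to the fixed border.
    """
    margin = border + window_size // 2
    top, left = margin, margin
    bottom, right = height - margin, width - margin
    if top >= bottom or left >= right:
        raise ValueError("image too small for the requested margins")
    return top, bottom, left, right


# Example: a 384x288 image evaluated with a 9x9 matching window.
bounds = evaluation_bounds(height=288, width=384, border=32, window_size=9)
```

Using the same bounds for every method keeps the pixel count identical across the compared methods, which is the point of the fairness rule.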

Figure 1: Tsukuba, Venus, Teddy and Cones stereo pairs from the Middlebury Stereo Vision Page - Evaluation Version 2. Left column: Synthetically altered left images. Middle column: The right images. Right column: The ground truth disparities. Note that, in the left image, important details are lost due to the cosine transformation.

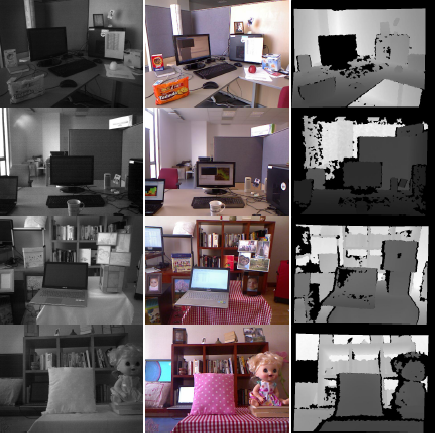

The Kinect dataset contains infrared (left) and visible (right) images captured from a Kinect device. The Kinect device was introduced by Microsoft for the Xbox 360 game console and lets users use their own bodies as the game controller.

The Kinect device (Xbox 360) has a built-in RGB camera and an infrared camera-projector pair. The infrared projector casts beams onto the scene and the infrared camera senses them, which enables the device to generate a 3D depth map of the scene, the intended target being the human body.

To use the built-in infrared and visible cameras for multi-modal stereo vision, the two cameras must be stereo-rectified so that the epipolar constraint is satisfied. This is accomplished using the RGBDemo software with the OpenNI back-end and a set of checkerboard images (around 50 poses) to find the extrinsic and intrinsic parameters of the IR and RGB cameras. The software uses the OpenCV camera calibration and 3D reconstruction (calib3d) module for this purpose.
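For a rectified pair, the epipolar constraint reduces to corresponding points lying on the same image row. A simple sanity check, sketched below, is the mean absolute row difference between matched points such as checkerboard corners. This is not part of RGBDemo or OpenCV: the function name `mean_row_offset` and the sample coordinates are hypothetical.

```python
def mean_row_offset(left_points, right_points):
    """Mean |y_left - y_right| over matched (x, y) points.

    Values near zero suggest the pair is well rectified.
    """
    if not left_points or len(left_points) != len(right_points):
        raise ValueError("need two non-empty point lists of equal length")
    total = sum(
        abs(y_left - y_right)
        for (_, y_left), (_, y_right) in zip(left_points, right_points)
    )
    return total / len(left_points)


# Hypothetical matched corners in the IR (left) and RGB (right) images.
ir_corners = [(10.0, 5.0), (20.0, 8.0)]
rgb_corners = [(7.0, 5.5), (15.0, 7.5)]
offset = mean_row_offset(ir_corners, rgb_corners)
```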

Using the Kinect device, a stereo vision evaluation dataset composed of infrared and visible image pairs was constructed. For this purpose, several indoor scenes, such as office cubicles and living room corners, were prepared with objects of differing reflectance properties and recorded by the infrared and visible cameras of the device. The images are stereo-rectified, and a total of 24 image pairs are stored in the dataset.

Figure 2: The image pairs of Dataset #2 - Kinect Dataset. Left column: Left (IR) camera images. Middle column: Right (RGB) camera images. Right column: Kinect’s native depth computations.

The datasets can be downloaded using the following link as a RAR file (58MB):

MYamanSKalkan_Multi-Modal_Stereo_Datasets.rar

The synthetically modified dataset is made available thanks to the Middlebury Stereo Evaluation Dataset.

Please cite the following paper if you use this dataset: